How product development benefits from flow - Part 2

To quickly recap: this is a two-part series in which I explore the benefits of flow for (software) product development from two angles:

Part 1: the matter of foundation, i.e., a pragmatic and effective way to respect flow.

Part 2: the matter of leverage, i.e., how flow tends to increase the likelihood of achieving the things that matter most (the outcomes that drive impact). This is the focus of this second and final part.

In the first part, I articulated an underlying principle (through a systems-thinking lens):

The matter of foundation:

Any system for delivering products can improve by constraining its load of work, which in turn leads to shorter feedback loops (i.e., faster learning).

I also proposed a simple game to simulate and explore the implications of the choices we make while executing, particularly from the perspective of focus, where WIP limits are possibly the simplest and most underrated advice for getting there (i.e., creating focus).

Before getting to the essence of Part 2 (the matter of leverage), though, I thought it would be useful to illustrate one play of the simulation game and, with that, highlight some of its implications.

Let's play: illustrating a WIP simulation game

We will use a tweaked version of the example game setup shared in Part 1:

Resources: a pair of dice and an (Excel) spreadsheet on a laptop.

Scenarios: (A) 1 deliverable WIP at a time; (B) 4 deliverables WIP in parallel

Decisions:

How much progress to be made:

How many items to divide progress against:

Simplified: progress is always divided equally across all (non-blocked) items in WIP

Whether any top-most item(s) are to be considered blocked:

Option: 2 out of 6 chance of Scenario A being idle
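To make the mechanics concrete, here is a minimal Python sketch of one way to run the game without a spreadsheet. It is not the exact setup behind the play shown next: the open choices above are filled with hypothetical assumptions (one die gives the units of progress per iteration, a second die blocks the top-most item on a roll of 1 or 2, and each deliverable needs a hypothetical 10 units of work). Both scenarios replay the same pre-rolled dice, so the WIP limit is the only difference between them.

```python
import random
from fractions import Fraction

# Hypothetical parameters: the game setup leaves these choices open.
WORK_PER_ITEM = 10     # units of work each deliverable needs (assumption)
NUM_ITEMS = 4          # deliverables in priority order (index 0 = top priority)
MAX_ITERATIONS = 20    # iterations available in the game


def roll_dice(rng, iterations):
    """Pre-roll (progress, blocked) pairs so both scenarios see the same dice."""
    rolls = []
    for _ in range(iterations):
        progress = rng.randint(1, 6)       # die 1: units of progress this iteration
        blocked = rng.randint(1, 6) <= 2   # die 2: 2-in-6 chance the top-most item is blocked
        rolls.append((progress, blocked))
    return rolls


def simulate(rolls, wip_limit):
    """Play one scenario; return ({item: finish_iteration}, idle_iterations)."""
    remaining = [Fraction(WORK_PER_ITEM)] * NUM_ITEMS
    finished = {}
    idle = 0
    for iteration, (progress, blocked) in enumerate(rolls, start=1):
        if len(finished) == NUM_ITEMS:
            break                          # everything delivered: stop counting
        # Items allowed in progress: the top unfinished ones, up to the WIP limit.
        active = [i for i in range(NUM_ITEMS) if i not in finished][:wip_limit]
        if blocked:
            active = active[1:]            # the top-most item cannot move this iteration
        if not active:
            idle += 1                      # capacity wasted: nothing unblocked to work on
            continue
        share = Fraction(progress, len(active))  # progress split equally across active items
        for i in active:
            remaining[i] -= share
            if remaining[i] <= 0:          # leftover share on a finished item is simply lost
                finished[i] = iteration
    return finished, idle


rng = random.Random(2024)                  # fixed seed so a play can be replayed
rolls = roll_dice(rng, MAX_ITERATIONS)
for name, wip_limit in (("A", 1), ("B", 4)):
    done, idle_count = simulate(rolls, wip_limit)
    print(f"Scenario {name}: finished={dict(sorted(done.items()))}, idle={idle_count}")
```

Feeding both scenarios the same rolls (common random numbers) keeps luck from masking the effect of the WIP limit. Losing leftover progress when an item finishes mid-iteration is a further simplification.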

Here's the snapshot of the simulation:

And here's the consolidation of the outcome:

Some observations can be derived from the simulation:

Scenario B took almost 5x as many iterations as Scenario A to finish its first deliverable, and that first deliverable was not the top priority.

Scenario B took 33% more iterations than Scenario A to complete 3 items, and still none of them was the top priority.

In the end, both scenarios finished all 4 items at the same time (iteration 18), and only then did Scenario B finish the top priority; in other words, Scenario B needed 6x as many iterations to deliver the top priority.

Scenario A's delivery pattern is smooth and incremental, with an item finished every 3-6 rounds, while Scenario B's is concentrated in one big batch towards the end.

All of that despite a bias in favor of Scenario B, in which capacity is never fully wasted, while Scenario A was idle 17% of the time.

Key takeaway: if all we cared about was whether the 4 items could be finished within the available 20 iterations, the scenarios would look comparable on the surface. But that would miss a couple of fundamental points: for example, that Scenario A delivered the items with about 17% idle time (slack that could be used productively for other relevant purposes), or the potentially increased risk of delivering everything towards the end of the available period (i.e., longer, delayed feedback loops). This is purely from a flow standpoint, and obviously flow is not the only factor at play.

Now, since the game's design stated that the deliverables were prioritized, there should be some underlying reason for that order. To my knowledge, one of the most useful ways to reason about it is through a frame of risk or loss of opportunity (thanks, Don Reinertsen), which can be modeled as Cost of Delay (CoD). To put things in perspective, let's imagine we had a good enough estimate for each of the deliverables targeted in the simulation:

Deliverable 1: 4,000 "moneys"/iteration

Deliverable 2: 3,000 "moneys"/iteration

Deliverable 3: 2,000 "moneys"/iteration

Deliverable 4: 1,000 "moneys"/iteration

The resulting CoD for each simulated scenario would then be:

Scenario A CoD: 68,000 "moneys" (i.e., 55% better!)

Scenario B CoD: 151,000 "moneys"
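The CoD arithmetic can be made explicit with a small sketch. It assumes a linear CoD in which each deliverable accrues its per-iteration rate for every iteration until it is finished (counting from iteration 0). That accrual rule and the dice-free schedules below are illustrative assumptions, since the play's per-item finish iterations are not listed here; the only figures taken from the text are the four rates and the two totals, from which the 55% follows.

```python
# CoD rates in "moneys" per iteration, in priority order (Deliverables 1-4 above).
COD_RATES = [4_000, 3_000, 2_000, 1_000]


def total_cost_of_delay(rates, finish_iterations):
    """Linear CoD: each deliverable costs `rate` per iteration until it finishes.

    Assumes cost accrues from iteration 0 up to the finish iteration
    (an illustrative assumption, not a rule stated in the article).
    """
    if len(rates) != len(finish_iterations):
        raise ValueError("need one finish iteration per deliverable")
    return sum(rate * t for rate, t in zip(rates, finish_iterations))


def relative_saving(better, worse):
    """Fraction of the worse total that the better one avoids."""
    return (worse - better) / worse


# The two totals reported for the play: the 55% figure follows from them.
print(round(relative_saving(68_000, 151_000) * 100))    # → 55

# Hypothetical schedules: every item needs 4 iterations of full capacity, no blocking.
one_at_a_time = [4, 8, 12, 16]      # WIP = 1: items finish one after another
all_in_parallel = [16, 16, 16, 16]  # WIP = 4: capacity split equally, all finish together
print(total_cost_of_delay(COD_RATES, one_at_a_time))    # → 80000
print(total_cost_of_delay(COD_RATES, all_in_parallel))  # → 160000
```

Even in this idealized case, where both schedules finish the last item at the same iteration, working one item at a time in priority order halves the total CoD, because the high-rate items stop accruing cost early.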

Naturally, the above are relatively simplified notions, and the reality of product development is often more complex than that.

At the most basic level, however good our attempt to quantify the opportunity loss (e.g., with CoD), we can't know for sure whether the impact will turn out as we expected (or estimated).

There are other factors at play, too, such as the fact that opportunity-loss functions (as modeled with CoD) are not necessarily linear, as they were in my simple example. That brings me full circle to the essence of this piece.

There's one more thing: the matter of leverage

Let's take a small step back to summarize the principle of Part 1 (the matter of foundation) and reframe it with the goal of product development in mind. The best definition I know of what product development is about is captured in this short video by Jeff Patton (creator of Story Mapping):

Product development is about changing the world (in the sense of solving a problem or meeting a customer need), and doing so by maximizing impact (gains) while minimizing output (cost).

The challenge is precisely how to find the leverage to do that (maximize impact while minimizing output) in a context of inherent complexity. That alone favors any approach that promotes flow (smooth, incremental delivery that leads to shorter feedback loops and thus faster learning). With a product lens on, we can bring it all together in a little "system" that could look something like this.

There's more, though, which only makes it more appealing. As we started exploring in the initial section with the simulation game, by delivering faster (or more smoothly and incrementally), chances are that some tangible outcomes (even with associated "moneys") can be unlocked sooner when framed from an opportunity-loss standpoint (e.g., using CoD). This can also be an input for priority decisions (selection, sequencing, scheduling…).

But I can almost hear some of you circling back to my point about inherent complexity: often we can't know whether there will be an impact, or to what extent. And you'd be right to point that out. Yet that is just another reason to lean towards "going around" the product delivery system above faster and with good pacing (smooth flow).

There's one more angle worth exploring, though: opportunity loss does not always behave in a regular, linear way (contrary to the simplified example). Cost of delay can also follow other archetypes, such as:

An "intangible risk profile": it barely costs you anything until it suddenly does, and then with exponential growth (so-called technical debt is typically a good example). By the way, this is a good use for the "slack" you might have in the system from not overloading it with too much WIP (and perhaps being temporarily idle due to a blockage, or whatnot).


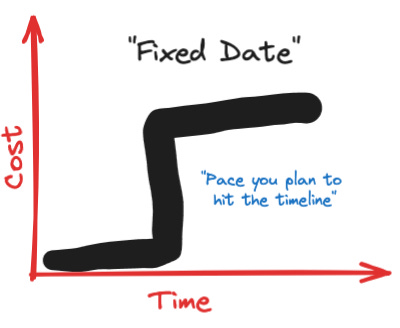

Or a "fixed date risk profile": a one-off opportunity linked to an event with specific timing. The point here is that being ready too early can be seen as waste.
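The CoD shapes discussed here can be sketched as simple cost functions of the delivery iteration `t`. The functional forms and every parameter below (`rate`, `base`, `growth`, `deadline`, `lost_value`) are hypothetical illustrations chosen to mirror the descriptions, not calibrated models.

```python
import math


def linear_cod(t, rate):
    """Regular profile: cost grows at a constant rate per iteration (the simplified example)."""
    return rate * t


def intangible_cod(t, base, growth):
    """Intangible risk profile: near zero early on, then exponential growth (e.g., technical debt)."""
    return base * (math.exp(growth * t) - 1)


def fixed_date_cod(t, deadline, lost_value):
    """Fixed date profile: nothing lost if ready by the event, the whole opportunity lost after.

    Finishing earlier than `deadline` does not lower the cost, which is why
    being ready too early can be seen as waste.
    """
    return 0 if t <= deadline else lost_value


# Compare the profiles at a few delivery iterations (hypothetical parameters).
for t in range(0, 21, 5):
    print(
        t,
        linear_cod(t, rate=1_000),
        round(intangible_cod(t, base=50, growth=0.3)),
        fixed_date_cod(t, deadline=12, lost_value=20_000),
    )
```

Under the exponential profile, the same one-iteration delay costs far more late than early, which is why spare slack is well spent on that kind of risk before it compounds.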

The real point is that these nuances and intricacies require proper tactics for managing the flow of work. The implication is that the *idealistic* scenario of always doing things one by one is not necessarily the pragmatic thing to do. That makes managing flow an ongoing, active need, to ensure sustainable, well-paced delivery with a holistic view of what managing a product means.

Thus, an underlying principle could be articulated as something like:

The matter of leverage:

To increase its chances of being effective ("maximize impact while minimizing output") and to do so sustainably, a product delivery "system" can find leverage by building on a foundation of shorter feedback loops (thus a faster pace of learning) and by actively managing the flow of work from an "optionality" (opportunity loss) perspective that also takes a holistic* view of what it means to manage a product.

"What's in it for you" (or your product) may range from:

Improved chances of focusing on valuable things, enabled by faster/better learning.

The possibility of unlocking tangible outcomes faster (through smoother, more incremental delivery).

Increased chances of delivering sustainably by actively managing flow with a holistic* view.

*The metaphor I like to use for this "holistic view of managing a product" is "gardening", in the sense that what matters is not only what you do directly to the plants but also keeping the surrounding environment fit for purpose.

By Rodrigo Sperb. Feel free to connect; I'm happy to engage and interact. If I can be of further help to you or your organization in getting better at product development, I am available for part-time advisory, consulting, or contract-based engagements.